this post was submitted on 12 Jun 2024

Programming

A couple of ideas:

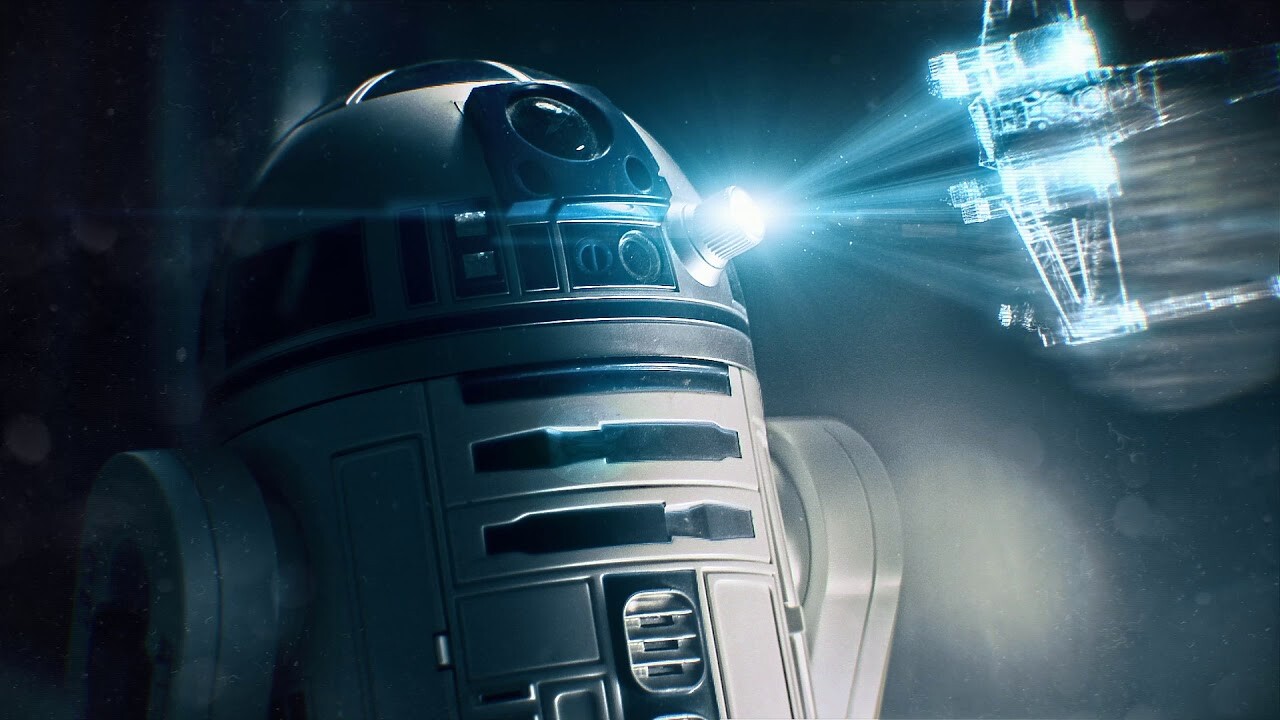

Encoding holograms

Decoding holograms

Last thought

Holography is often used to record information from the real world, and in that process it's impossible to record the light's phase during the encode step, because a physical sensor only measures intensity. Physicists call it "the phase problem", and there are all kinds of fancy tricks to try to get around it when decoding holograms on the computer. If you're simulating everything from scratch, then you have the luxury of recording the phase as well as the amplitude, and this should make decoding much easier as a result!
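To make that last point concrete, here's a rough sketch of the difference. It assumes a toy 1D scene and uses a plain discrete Fourier transform as a stand-in for light propagating to the hologram plane (roughly a far-field model). The scene values and the `dft` helper are made up for illustration, so treat it as a starting point rather than a real holography pipeline.

```python
import cmath

def dft(signal, inverse=False):
    """Naive O(n^2) discrete Fourier transform.

    Assumption: used here as a stand-in for far-field propagation
    between the scene and the hologram plane.
    """
    n = len(signal)
    sign = 1 if inverse else -1
    out = []
    for k in range(n):
        total = sum(
            signal[j] * cmath.exp(sign * 2j * cmath.pi * j * k / n)
            for j in range(n)
        )
        out.append(total / n if inverse else total)
    return out

# Hypothetical 1D scene: two point emitters with different brightness.
scene = [0, 0, 1.0, 0, 0, 0.5, 0, 0]

# Encode: simulate the complex field arriving at the hologram plane.
field = dft(scene)

# Decode with the full complex field (amplitude and phase):
# a plain inverse transform is enough.
decoded_full = [abs(v) for v in dft(field, inverse=True)]

# Decode from amplitude only, which is all a real sensor could give you.
amplitude_only = [abs(v) for v in field]
decoded_no_phase = [abs(v) for v in dft(amplitude_only, inverse=True)]

# Worst-case difference between each reconstruction and the original scene.
full_error = max(abs(a - b) for a, b in zip(decoded_full, scene))
no_phase_error = max(abs(a - b) for a, b in zip(decoded_no_phase, scene))

print("error with phase:   ", full_error)
print("error without phase:", no_phase_error)
```

With the phase kept, the reconstruction matches the scene up to floating-point error. With the phase thrown away, the naive inverse puts energy in the wrong places, which is the gap those phase-retrieval tricks try to close.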

Thanks! Finally something concrete. Once I return to this to write a POC I'll revisit your tips here.