this post was submitted on 30 Nov 2024

372 points (98.2% liked)

Programmer Humor

32702 readers

527 users here now

Post funny things about programming here! (Or just rant about your favourite programming language.)

Rules:

- Posts must be relevant to programming, programmers, or computer science.

- No NSFW content.

- Jokes must be in good taste. No hate speech, bigotry, etc.

founded 5 years ago

MODERATORS

you are viewing a single comment's thread

view the rest of the comments

view the rest of the comments

Wait a second. When did I say abstraction was bad? It's needed now. But when you are comparing 8bit machine code written for specific hardware against modern programming where you MUST handle multiple x86/x86_x64 cpus, multiple hardware combinations (either via the exe or by the libraries that must handle the abstraction) of course there is an overhead. If you want to tell me there's no overhead then I'm going to tell you where to go right now.

It's a necessary evil we must have in the modern world. I feel like the people hating on what I say are misunderstanding the point I make. The point is WHY we cannot compare these two things!

I didn't say that you said abstraction was bad. What I said was that you're conflating abstraction and inefficiency. These are two separate things. There is a certain amount of overhead involved in abstractions, but claiming that majority of the overhead we have today is solely due to the need for abstractions is unfounded.

Seems to me that you're aggressively misunderstanding the point I'm making. To clarify, my point is that the layers of shit that constitute modern software stacks are not necessary to provide the types abstractions we want to have. They are an artifact of the fact that software grows through accretion, and there's need for backwards compatibility.

If somebody was designing a system from scratch today it could both provide much better abstractions than we have and be far more efficient. The reason we're not doing that is because the effort of implementing everything from scratch would be phenomenal. So, we're stuck with taking older systems and building on top of them instead.

And on top of that, the dynamics of software development tend to favor building stuff that's just good enough. As long as people are willing to use the software then it's a success. There's very little financial incentive to keep optimizing it past that point.

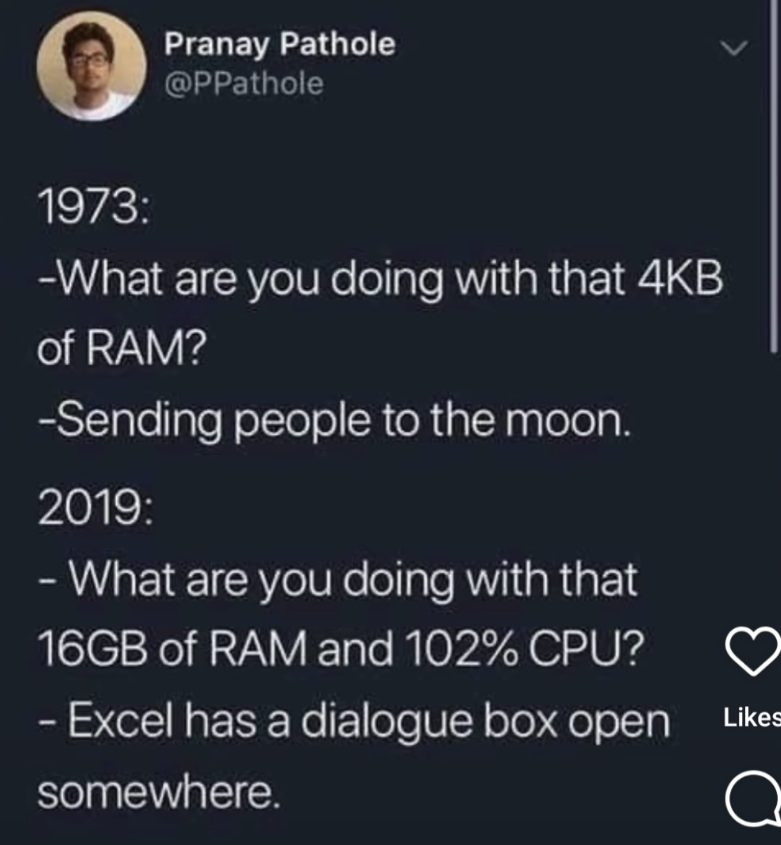

OK, look back at the original picture this thread is based on.

We have two situations.

The first is a dedicated system for providing navigation and other subsystems for a very specific purpose, with very specific hardware that is very limited. An 8 bit CPU with a very clearly known RISCesque instruction set, 4kb of ram and an bus to connect devices.

The second is a modern computer system with unknown hardware, one of many CPUs offering the same instruction set, but with differing extensions, a lot of memory attached.

You are going to write software very differently for these two systems. You cannot realistically abstract on the first system, in reality you can't even use libraries directly. Maybe you can borrow code from a library at best. On the second system you MUST abstract because, you don't know if the target system will run an Intel or Amd CPU, what the GPU might be, what other hardware is in place, etc etc.

And this is why my original comment was saying, you just cannot compare these systems. One MUST use abstraction, the other must not. And abstractions DO produce overhead (which is an inefficiency). But we NEED that and it's not a bad thing.

The original picture is a bit of humor that you're reading way too much into. All it's saying is that we're using computing resources incredibly inefficiently, which is undeniably the case. Of course, you can't seriously make a direct comparison between the two scenarios. Everybody understands that.