this post was submitted on 21 Aug 2023

3181 points (98.3% liked)

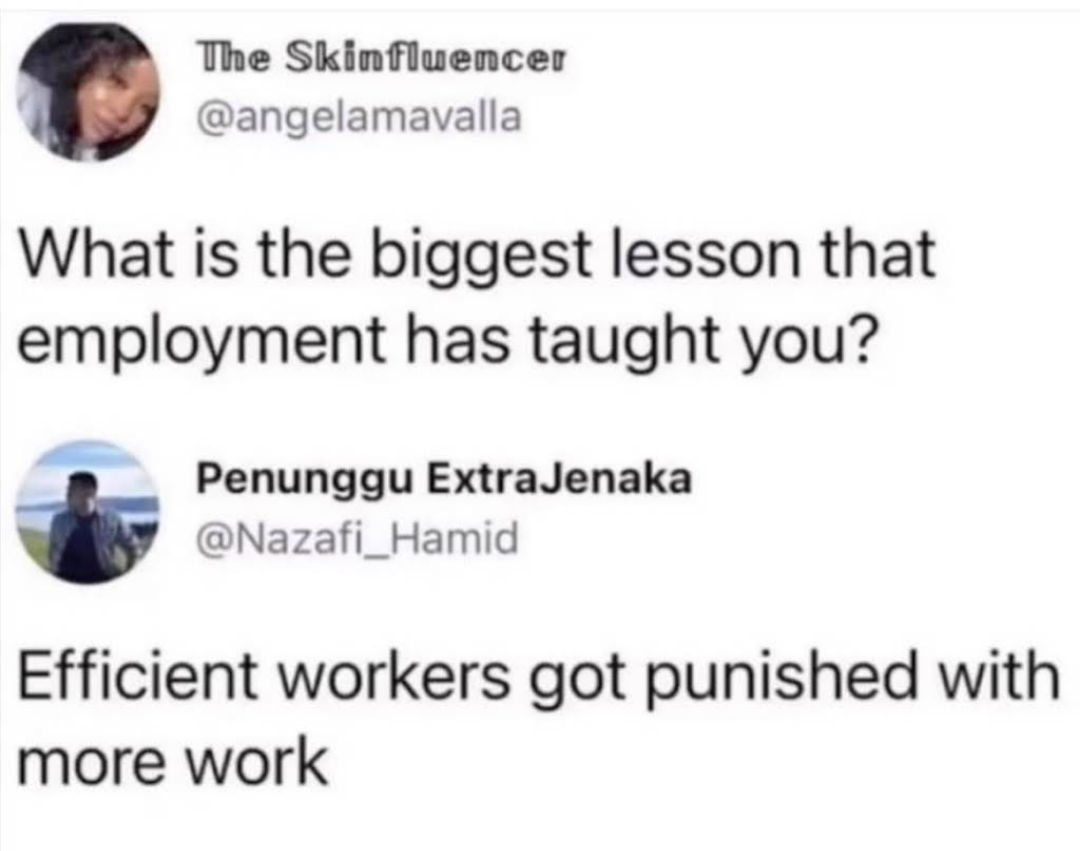

That's why you never tell anyone.

“I’ve automated my slack communications using GPT-4. Let’s see if anyone notices”

Classic Carl

Gotta do the freegpt and train it on your files, folders and messages.

If I understand LLMs right, they have a maximum prompt length, but can be trained on any amount of text data.

The only way to add knowledge that doesn’t fit into a prompt is to put it in the training data and then re-train.

But you could describe some sort of algorithm that it can use to sleuth out data using API calls, and it would then have access to far more up-to-date data than can fit in a prompt. The catch is that the body of each response would have to become part of a prompt.

But the whole dataset it has access to doesn’t have to be mentioned in the conversation, so it doesn’t have to be part of the prompt. Ultimately you don’t want your AI assistant telling you everything it knows in each interaction; you want it to access some slice of your data world, make changes to it, then eventually get you an answer or a report.
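To make that concrete, here's a rough sketch of the "fetch a slice, then put it in the prompt" idea. Everything in it is made up for illustration: `DATA_STORE`, `fetch`, `pick_keys`, `build_prompt` and the 200-character limit are hypothetical stand-ins, not a real LLM or a real API, and a real setup would count tokens rather than characters.

```python
# Hypothetical sketch: no real LLM or API is involved here.
MAX_PROMPT_CHARS = 200  # assumed stand-in for a model's maximum prompt length

# Stand-in for "your data world": in practice far larger than any prompt.
DATA_STORE = {
    "slack/carl": "Carl: I've automated my Slack replies.",
    "files/report.txt": "Q3 report: revenue up 4 percent.",
    "files/notes.txt": "Reminder: renew the domain in October.",
}


def fetch(key):
    """Pretend API call that returns one slice of the data store."""
    return DATA_STORE.get(key, "")


def pick_keys(question):
    """Toy stand-in for the model deciding which slices to request."""
    words = [w for w in question.lower().split() if len(w) > 3]
    return [k for k in DATA_STORE if any(w in k for w in words)]


def build_prompt(question):
    """Fetch only the relevant slices and keep the prompt under the limit."""
    prompt = f"Question: {question}\n"
    for key in pick_keys(question):
        chunk = f"[{key}] {fetch(key)}\n"
        if len(prompt) + len(chunk) > MAX_PROMPT_CHARS:
            break  # fetched text counts against the prompt budget too
        prompt += chunk
    return prompt


print(build_prompt("what did carl say"))
```

The rest of the store never shows up in the prompt, but whatever does get fetched still has to fit inside the limit.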

What is FreeGPT by the way?

I'll try to get the actual name and repo since I want to leverage it. It's basically a reverse-engineered ChatGPT that is open source.

But yeah, I think the idea is you have prompts trigger the API call to get the additional data.

I didn't, but I also wasn't sneaky enough.