this post was submitted on 24 Jul 2022

Programmer Humor

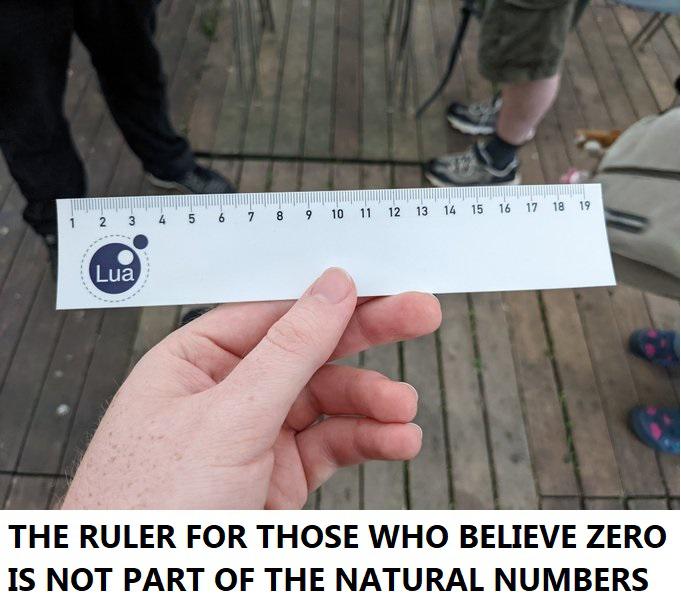

Idk if you are trolling, but in most cases 0 is considered a part of the natural numbers. And there is a huge difference between the naturals and the integers: the naturals are for induction, the integers are for algebra.

Depends on where you are! In some places it is more common to say that 0 is natural, and in others not. Some argue it's useful to have it in N; some say that it makes more historical and logical sense for 0 not to be in N, and use N_0 when including it. It's not a settled issue, it's a matter of perspective.

I guess it depends on the place. But the arguments for not including it seem futile, when

Of course 0 vs no 0 only matters if you actually do arithmetic with it. If you only index you could just as well start with 5.

(The only reasons I can think of to start at 1 are that 1 is then the 1st element, and that the sequence (1/n) is defined for all natural n.)
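To make the indexing point concrete, here is a minimal Python sketch. The names `items`, `next_zero_based`, and `next_one_based` are made up for illustration. It shows that the starting number is arbitrary when indices are only labels, that the choice starts to matter once you do arithmetic (here, wrap-around), and that 1/n is defined for every n when counting starts at 1.

```python
items = ["a", "b", "c"]

# Pure labelling: the starting number is arbitrary.
labels_from_0 = list(enumerate(items))           # labels 0, 1, 2
labels_from_5 = list(enumerate(items, start=5))  # labels 5, 6, 7

# Arithmetic on indices: the base changes the formula.
def next_zero_based(i, n):
    """Successor of index i in a cycle of n slots numbered 0..n-1."""
    return (i + 1) % n

def next_one_based(i, n):
    """Successor of index i in a cycle of n slots numbered 1..n."""
    return i % n + 1

# Starting at 1 keeps 1/n defined for every term of the sequence.
reciprocals = [1 / n for n in range(1, 5)]
```

With zero-based slots the wrap-around is a plain modulo; with one-based slots an extra shift is needed, which is one version of the "0 only matters for arithmetic" argument.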

Those are valid points and make some practical sense, but I've talked too much with mathematicians about this so let me give you another point of view.

First of all, we do modular arithmetic with integers, not natural numbers, same with all those objects you listed.

On the first point, we are not talking about 0 as a digit but as a number. The main argument against 0 being in N is more a philosophical one. What are we looking at when we study N? What is this set? "The integers starting from 0" seems a bit of a weird definition. Historically, the natural numbers were always the counting numbers, and that doesn't include 0 because you can't have 0 apples, so when we talk about N we're talking about the counting numbers. That's just the consensus where I'm from; if it's more practical to include 0 in whatever you are doing, you use N~0~. Also the axiomatization of N is more natural that way IMO.
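For reference, here is a sketch of how the Peano-style axioms look with either starting element. This is one common textbook presentation, not necessarily the one the comment has in mind; the symbol b for the base element and S for the successor map are notation chosen here.

```latex
% Peano-style axioms with a base element b (b = 1 or b = 0)
\begin{align*}
  &\text{(P1)}\quad b \in \mathbb{N} \\
  &\text{(P2)}\quad S : \mathbb{N} \to \mathbb{N} \text{ is injective} \\
  &\text{(P3)}\quad S(n) \neq b \text{ for all } n \in \mathbb{N} \\
  &\text{(P4)}\quad \text{if } b \in A,\ \text{and } n \in A \Rightarrow S(n) \in A,\ \text{then } A = \mathbb{N}
\end{align*}

% Only the base cases of the recursive definitions depend on b:
\begin{align*}
  b = 0:&\quad a + 0 = a, & a \cdot 0 &= 0 \\
  b = 1:&\quad a + 1 = S(a), & a \cdot 1 &= a \\
  \text{both}:&\quad a + S(n) = S(a + n), & a \cdot S(n) &= a \cdot n + a
\end{align*}
```

The axioms themselves are identical up to renaming b; the difference shows up only in the base cases of addition and multiplication.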

You are talking to one right now :) (not sure if a bachelor's degree is enough to call yourself one)

You can actually. In fact, right at this moment I have 0 apples. If 0 is not natural, then you have no way of describing the number of apples I have.

There are a lot of concepts (degree of a polynomial, dimension of a space, cardinality of a set, in a graph) where 0 is a natural possibility.
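A small Python sketch of a few of these counts coming out as 0. The helper `degree` and the coefficient-list representation are assumptions made for the example; it deliberately does not handle the zero polynomial.

```python
apples = []          # the apples I currently have
empty_set = set()    # a set with no elements

def degree(coeffs):
    """Degree of a polynomial given as coefficients [a0, a1, a2, ...].

    Assumes at least one coefficient is nonzero.
    """
    # The degree is the highest index carrying a nonzero coefficient.
    return max(i for i, a in enumerate(coeffs) if a != 0)

number_of_apples = len(apples)       # a count of zero apples
cardinality = len(empty_set)         # cardinality of the empty set
constant_degree = degree([7])        # the constant polynomial 7
quadratic_degree = degree([1, 0, 3]) # 1 + 3x^2
```

In each case the answer to "how many?" is a number that has to live somewhere, which is the argument for letting 0 count as natural.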

So I think 1-indexing is fine, I use it all the time, but to me 0 belongs with the naturals. I will say tho, that 0 does not make sense to me as an ordinal. "He finished the race in 0-th place"????