If nothing else, you've definitely stopped me forever from thinking of jq as sql for json. Depending on how much I hate myself by next year I think I might give kusto a shot for AOC '25

Architeuthis

22-2 commentary

I got a different solution than the one given on the site for the example data: the sequence starting with 2 did not yield the expected solution pattern at all, and the one I actually got gave more bananas anyway.

The algorithm gave the correct result for the actual puzzle data though, so I'm leaving it well alone.

Also the problem had a strong map/reduce vibe so I started out with the sequence generation and subsequent transformations parallelized already from pt1, but ultimately it wasn't that intensive a problem.
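For anyone curious what the map/reduce shape looks like, here is a rough Python sketch (not the code from this post, which isn't shown). The secret-number steps in `next_secret` are an assumption based on my recollection of the puzzle statement, and every function name here is made up for illustration. The map step turns each buyer's seed into a table of "first price seen after each run of four changes"; the reduce step sums those tables and takes the best total.

```python
from collections import Counter

MOD = 16777216  # prune modulus, as recalled from the puzzle text (assumption)

def next_secret(s):
    # Mix (xor) and prune (mod) three times, per the puzzle statement as recalled.
    s = (s ^ (s * 64)) % MOD
    s = (s ^ (s // 32)) % MOD
    s = (s ^ (s * 2048)) % MOD
    return s

def prices(seed, n=2000):
    # Price is the last digit of each secret, including the starting one.
    out = [seed % 10]
    for _ in range(n):
        seed = next_secret(seed)
        out.append(seed % 10)
    return out

def first_sale_prices(price_list):
    # Map step: for each window of four consecutive changes, remember the
    # price at the FIRST time that window appears.
    changes = [b - a for a, b in zip(price_list, price_list[1:])]
    first = {}
    for i in range(3, len(changes)):
        first.setdefault(tuple(changes[i - 3:i + 1]), price_list[i + 1])
    return first

def best_total(seeds, n=2000):
    # Reduce step: sum the per-buyer tables, then pick the best pattern.
    totals = Counter()
    for table in map(lambda s: first_sale_prices(prices(s, n)), seeds):
        totals.update(table)
    return max(totals.values(), default=0)
```

Since each buyer's table is independent, the built-in `map` could be swapped for a process pool's `map` if the input were big enough to justify it.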

Toddler's sick (but getting better!) so I've been falling behind, oh well. Doubt I'll be doing 24 & 25 on their release days either as the off-days and festivities start kicking in.

I mean, you could have answered by naming one fabled new ability LLMs suddenly 'gained' instead of being a smarmy tadpole, but you didn't.

What new AI abilities? LLMs aren't pokemon.

Slate Scott just wrote about a billion words of extra rigorous prompt-anthropomorphizing fanfiction on the subject of the paper, he called the article When Claude Fights Back.

Can't help but wonder if he's just a critihype enabling useful idiot who refuses to know better or if he's being purposefully dishonest to proselytize people into his brand of AI doomerism and EA, or if the difference is meaningful.

edit: The Claude syllogistic scratchpad also makes an appearance, it's that thing where we pretend that they have a module that gives you access to the LLM's inner monologue complete with privacy settings, instead of just recording the result of someone prompting a variation of "So what were you thinking when you wrote so and so, remember no one can read what you reply here". Cue a bunch of people in the comments moving straight into wondering if Claude has qualia.

Rationalist debatelord org Rootclaim, who in early 2024 lost a $100K bet by failing to defend covid lab leak theory against a random ACX commenter, will now debate millionaire covid vaccine truther Steve Kirsch on whether covid vaccines killed more people than they saved, the loser gives up $1M.

One would assume this to be a slam dunk, but then again one would assume the people who founded an entire organization about establishing ground truths via rationalist debate would actually be good at rationally debating.

It's useful insofar as you can accommodate its fundamental flaw of randomly making stuff the fuck up, say by having a qualified expert constantly combing its output instead of doing original work, and don't mind putting your name on low quality derivative slop in the first place.

16 commentary

DFS (it's all dfs all the time now, this is my life now, thanks AOC) pruned by unless-I-ever-passed-through-here-with-a-smaller-score-before worked well enough for Pt1. In Pt2, in order to get all the paths, I only had to loosen the filter by a) not pruning for equal scores and b) only pruning if the direction also matched.
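A minimal Python sketch of the loosened Pt2 filter, for illustration only (the original solution isn't shown here). It assumes the usual setup for this puzzle: a wall-enclosed grid of `#`, `.`, `S` and `E`, starting facing east, 1 point per step and 1000 per 90 degree turn. `dfs_paths` and `find` are hypothetical names.

```python
DIRS = [(0, 1), (1, 0), (0, -1), (-1, 0)]  # east, south, west, north

def find(grid, ch):
    for r, row in enumerate(grid):
        c = row.find(ch)
        if c != -1:
            return (r, c)
    raise ValueError(f"{ch!r} not in grid")

def dfs_paths(grid):
    """Return every (score, path) that reached E without being pruned."""
    start, end = find(grid, "S"), find(grid, "E")
    seen = {}       # (position, direction) -> lowest score seen there
    finished = []
    stack = [(start, 0, 0, (start,))]  # position, direction, score, path
    while stack:
        pos, d, score, path = stack.pop()
        # Loosened filter: prune only on a strictly smaller earlier score
        # for the same tile AND the same direction; ties are kept.
        if seen.get((pos, d), score) < score:
            continue
        seen[(pos, d)] = score
        if pos == end:
            finished.append((score, path))
            continue
        for nd in ((d + 1) % 4, (d - 1) % 4):   # turn right / left in place
            stack.append((pos, nd, score + 1000, path))
        nr, nc = pos[0] + DIRS[d][0], pos[1] + DIRS[d][1]
        if grid[nr][nc] != "#":                 # step forward
            stack.append(((nr, nc), d, score + 1, path + ((nr, nc),)))
    return finished
```

The returned list still contains paths that were fine when explored but got beaten later, so the caller has to keep only the minimum score. This is exhaustive and can be slow on large mazes; it's only meant to show the pruning rule.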

Pt2 was easier for me because, while at first it took me a bit to land on lifting stuff from Dijkstra's algo to solve the challenge maze before the sun turns supernova, I tend to store the paths for debugging anyway, so it was trivial to group them by score and count by distinct tiles.
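The group-by-score-and-count-tiles step could look something like this in Python. This is a sketch, assuming the stored paths are available as `(score, path)` pairs where a path is a sequence of `(row, col)` tiles; `tiles_on_best_paths` is a made-up name.

```python
from collections import defaultdict

def tiles_on_best_paths(paths):
    """Group stored paths by score, then count distinct tiles for the lowest score."""
    tiles_by_score = defaultdict(set)
    for score, path in paths:
        tiles_by_score[score].update(path)   # set union drops duplicate tiles
    best = min(tiles_by_score)
    return best, len(tiles_by_score[best])

# Two tied paths sharing start and end, plus one worse path.
example = [
    (7, [(0, 0), (0, 1), (1, 1)]),
    (7, [(0, 0), (1, 0), (1, 1)]),
    (9, [(0, 0), (0, 1), (0, 2), (1, 2), (1, 1)]),
]
print(tiles_on_best_paths(example))
```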

And all that stuff just turned out to be true

Literally what stuff, that AI would get somewhat better as technology progresses?

I seem to remember Yud specifically wasn't that impressed with machine learning and thought so-called AGI would come about through ELIZA type AIs.

In every RAG guide I've seen, the suggested system prompts always tended to include some more dignified variation of "Please for the love of god only and exclusively use the contents of the retrieved text to answer the user's question, I am literally on my knees begging you."

Also, if reddit is any indication, a lot of people actually think that's all it takes and that the hallucination stuff is just people using LLMs wrong. I mean, it would be insane to pour so much money into something so obviously fundamentally flawed, right?

Pt2 commentary

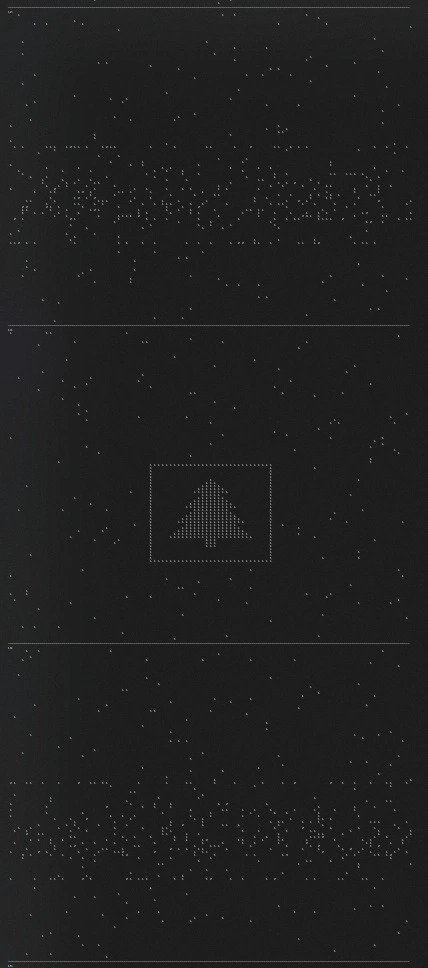

I randomly got it by sorting for the most robots in the bottom left quadrant while looking for robot concentrations, it was number 13. Despite being in the centre of the grid it didn't show up when sorting for most robots in the middle 30% columns of the screen, which is kind of wicked, in the traditional sense.

The first things I tried were looking for horizontal symmetry (find a grid where all the lines have the same number of robots on the left and on the right of the middle axis, there is none, and the tree is about a third to a quarter of the matrix on each side) and looking for grids where the number of robots increased towards the bottom of the image (didn't work, because turns out tree is in the middle of the screen).

I think I was on the right track with looking for concentrations of robots, wish I'd thought about ranking the matrices according to the number of robots lined up without gaps. Don't know about minimizing the safety score, sorting according to that didn't show the tree anywhere near the first tens.
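A small Python sketch of the "rank by robots lined up without gaps" idea, as an illustration rather than what was actually run. It assumes each robot is a `(px, py, vx, vy)` tuple on a grid that wraps around at the edges; the function names and the `steps` parameter are made up.

```python
def positions(robots, t, width, height):
    # Occupied cells after t seconds; % keeps negative velocities in range.
    return {((px + vx * t) % width, (py + vy * t) % height)
            for px, py, vx, vy in robots}

def longest_run(cells, width, height):
    # Longest horizontal streak of occupied cells with no gaps.
    best = 0
    for y in range(height):
        run = 0
        for x in range(width):
            run = run + 1 if (x, y) in cells else 0
            best = max(best, run)
    return best

def rank_steps(robots, width, height, steps):
    # Step numbers ordered from longest streak to shortest.
    return sorted(
        range(steps),
        key=lambda t: -longest_run(positions(robots, t, width, height), width, height),
    )
```

The first few entries of `rank_steps(...)` are then the grids worth eyeballing. Whether the tree lands at the very top depends on the input, so treat it as a shortlist.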

Realizing that the patterns start recycling at ~10,000 iterations simplified things considerably.
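The recycling can be checked with a few lines of Python. This sketch assumes the 101 by 103 wrapping grid from the puzzle statement (the dimensions aren't given in this post): x positions repeat every `width` steps and y positions every `height` steps, so the whole picture repeats after at most `lcm(width, height)` steps. The robots below are invented test values.

```python
from math import lcm  # Python 3.9+

WIDTH, HEIGHT = 101, 103  # assumed puzzle grid size

def positions(robots, t, width=WIDTH, height=HEIGHT):
    # Robot positions after t seconds on a wrapping grid.
    return [((px + vx * t) % width, (py + vy * t) % height)
            for px, py, vx, vy in robots]

period = lcm(WIDTH, HEIGHT)
sample = [(0, 4, 3, -3), (6, 3, -1, -3), (10, 3, -1, 2)]  # made-up robots

# After one full period every robot is back where it was.
same = positions(sample, 17) == positions(sample, 17 + period)
print(period, same)
```

So only the first `period` grids ever need to be ranked.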

The tree on the terminal output

(This is three matrices separated by rows of underscores)

23-2

Leaving something to run for 20-30 minutes expecting nothing and actually getting a valid and correct result: new positive feeling unlocked. Now to find out how I was ideally supposed to solve it.