I was pleasantly surprised by many models of the DeepSeek family. Verbose, but in a good way? At least that was my experience. Love to see it mentioned here.

I appreciate this comment more than you will know. Thanks for sharing your thoughts.

It’s been a challenge realizing this is more than a time capsule - it’s a grassroots community and open-source project bigger than me. Adjusting the content to reflect shared interests is something I have grappled with these last few weeks - especially as we move past some of the exciting innovations we saw earlier this year.

I think the type of content series you mention is the next step here - that being practical and pragmatic insights that illustrate / enable new workflows and applications.

That being said, this type of content creation will likely take more time than the journalistic reporting I’ve been doing - but I think it’s absolutely worth the effort and the next logical evolution of whatever this forum becomes.

Thanks again for your kind words. I work 5/6 day weeks in my tech job on top of this, so burnout is a real thing. I think I’ll go for a hike this week and reevaluate how to best proliferate and spread FOSAI.

If you’re reading this now and have ideas of your own - I’m all ears.

This is on the horizon - I will definitely be making a post on the workflow and process once it is figured out.

I am actively exploring this question.

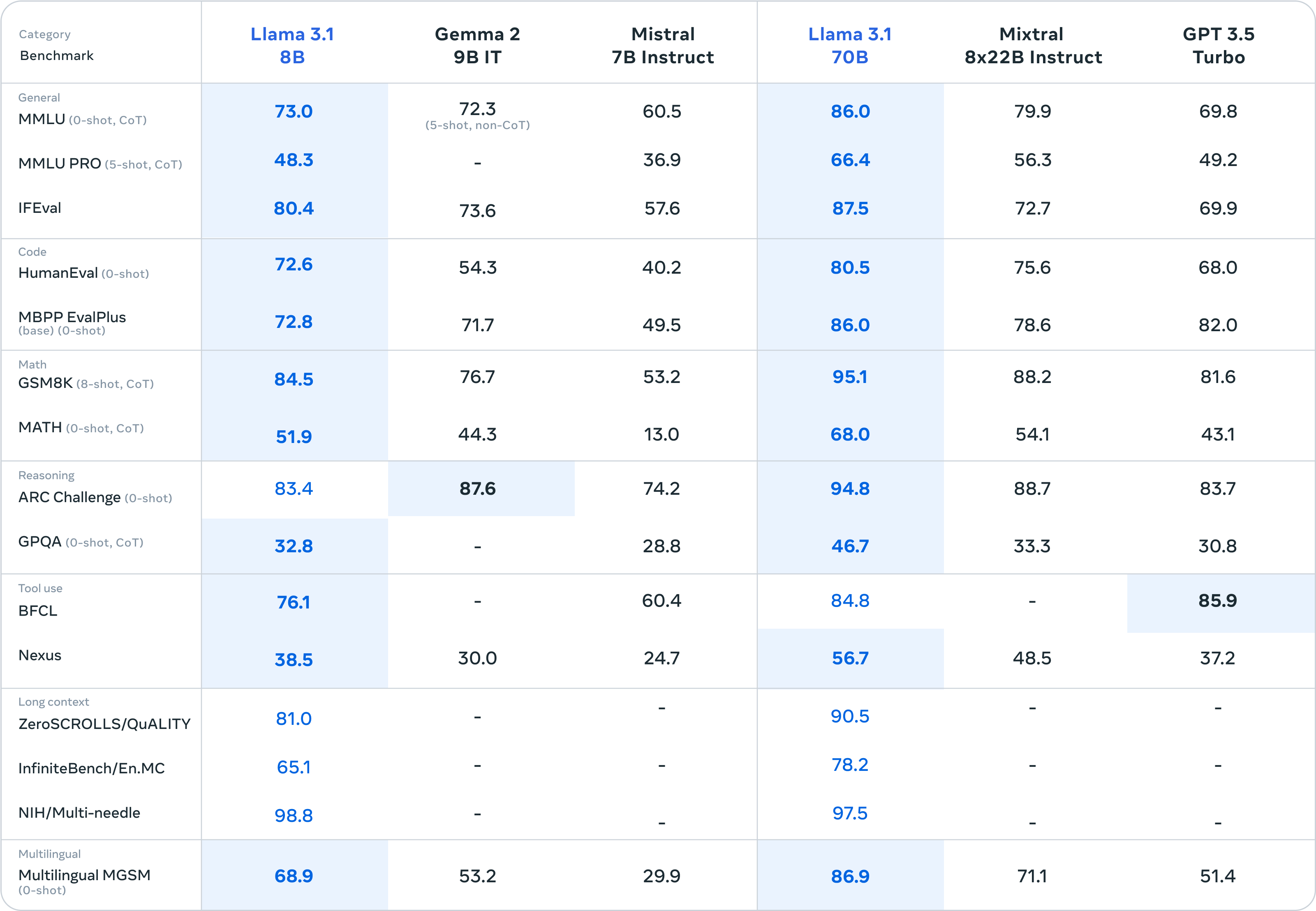

So far - it’s been the best performing 7B model I’ve been able to get my hands on. Anyone running consumer hardware could get a GGUF version running on almost any dedicated GPU/CPU combo.
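For a rough sense of why a 7B model fits on consumer hardware, here's a back-of-the-envelope sketch (not a benchmark). It assumes a nominal 7 billion parameters and counts the weights only, ignoring the KV cache, context buffers, and runtime overhead. The bit widths are nominal too; real GGUF quantization formats land at somewhat different effective sizes. The function name is just mine for illustration.

```python
def weight_footprint_gib(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in GiB."""
    total_bytes = n_params * bits_per_weight / 8
    return total_bytes / 1024**3


# Assumption: a nominal "7B" model; actual parameter counts vary by model.
N_PARAMS = 7e9

# Nominal bit widths only; real quantized files carry extra metadata and scales.
for bits in (16, 8, 4):
    size = weight_footprint_gib(N_PARAMS, bits)
    print(f"{bits:>2}-bit: ~{size:.1f} GiB")
```

Under those assumptions the weights drop from roughly 13 GiB at 16-bit to a bit over 3 GiB at 4-bit, which is why quantized 7B models are comfortable on modest GPUs or even CPU-only setups.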

I am a firm believer there is more performance and better quality of responses to be found in smaller parameter models. Not to mention the interesting use cases you could unlock by fine-tuning or applying an ensemble approach.

A lot of people sleep on 7B, but I think Mistral is a little different. There’s a lot of exploring left to do to find these use cases, but I think they’re out there waiting to be discovered.

I’ll definitely report back on how my first attempt at fine-tuning this goes. Until then, I suppose it would be great for any roleplay or basic chat interaction. Given its low headroom, it’s much more lightweight to prototype with than the other families and model sizes.

If anyone else has a particular use case for 7B models - let us know here. Curious to know what others are doing with smaller params.

What I find interesting is how useful these tools are (even with the imperfections that you mention). Imagine a world where this level of intelligence has a consistent low error rate.

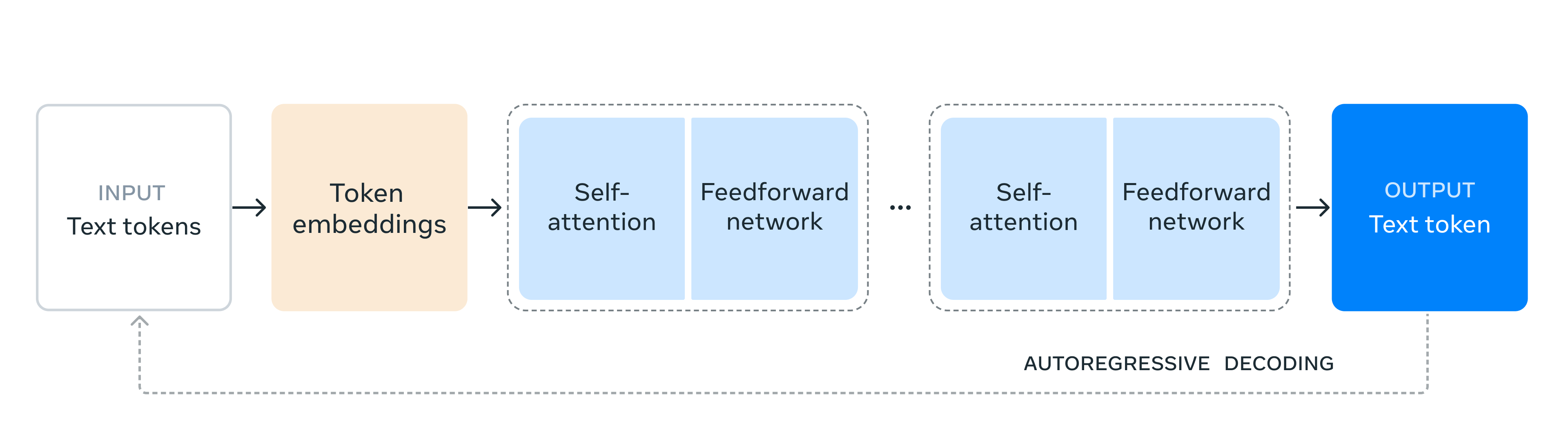

Semantic computation and agentic function calling with this level of accuracy will revolutionize the world. It’s only a matter of time, adoption, and availability.

I respect your honesty.

Google has absolutely tanked for me these last few years. It revolutionized the world by revolutionizing search. But ChatGPT has done the same, now better - and in a much more interesting way.

I’ll take a 10 second prompt process over 20 minutes of hunting down (advertised) paged results any day of the week.

I have learned everything I have about AI through AI mentors.

Having the ability to ask endless amounts of seemingly stupid questions does a lot for me.

Not to mention some of the analogies and abstractions you can utilize to build your own learning process.

I’d love to see schools start embracing the power of personalized mentors for each and every student. I think some of the first universities to embrace this methodology will produce some incredible minds.

You should try fine-tuning that legalese model! I know I’d use it. Could be a great business idea or generally helpful for anyone you release it to.

I cannot overstate how nice it is having a coding assistant 24/7.

I’m curious to see how projects like ChatDev evolve over time. I think agentic tooling is going to take us to some very sci-fi looking territory.

Semantic computation is the future.

I never considered 8 - 11. Those are really interesting use cases. I’m with you on every other point. I’m particularly interested in solving the messy unstructured notes scenario. I really feel you on that one. I’ll see what I can do!

What I find particularly exciting is that we’re seeing this evolution in real-time.

Can you imagine what these models might look like in 2 years? 5? 10?

There is a remarkable future on the horizon. I hope everyone gets an equal chance to be a part of it.

What sort of tokens per second are you seeing with your hardware? Mind sharing some notes on what you're running there? Super curious!
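If it helps for comparing notes, here's a minimal sketch of how I'd time it. `generate` is a hypothetical stand-in for whatever call your runtime exposes (assumed here to take a prompt and return the generated tokens), and `fake_generate` only exists so the snippet runs on its own. Many runtimes report their own timings, which will be more precise than this.

```python
import time
from typing import Callable, Sequence


def tokens_per_second(generate: Callable[[str], Sequence[str]], prompt: str) -> float:
    """Time one generation call and return generated tokens per second."""
    start = time.perf_counter()
    tokens = generate(prompt)  # hypothetical: returns the generated tokens
    elapsed = time.perf_counter() - start
    return len(tokens) / elapsed if elapsed > 0 else float("inf")


def fake_generate(prompt: str) -> list[str]:
    """Placeholder model: sleeps briefly, then echoes the prompt as 'tokens'."""
    time.sleep(0.05)
    return prompt.split()


rate = tokens_per_second(fake_generate, "one two three four")
print(f"{rate:.1f} tokens/s")
```

Note this lumps prompt processing and generation into one number, so a long prompt will drag the figure down.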