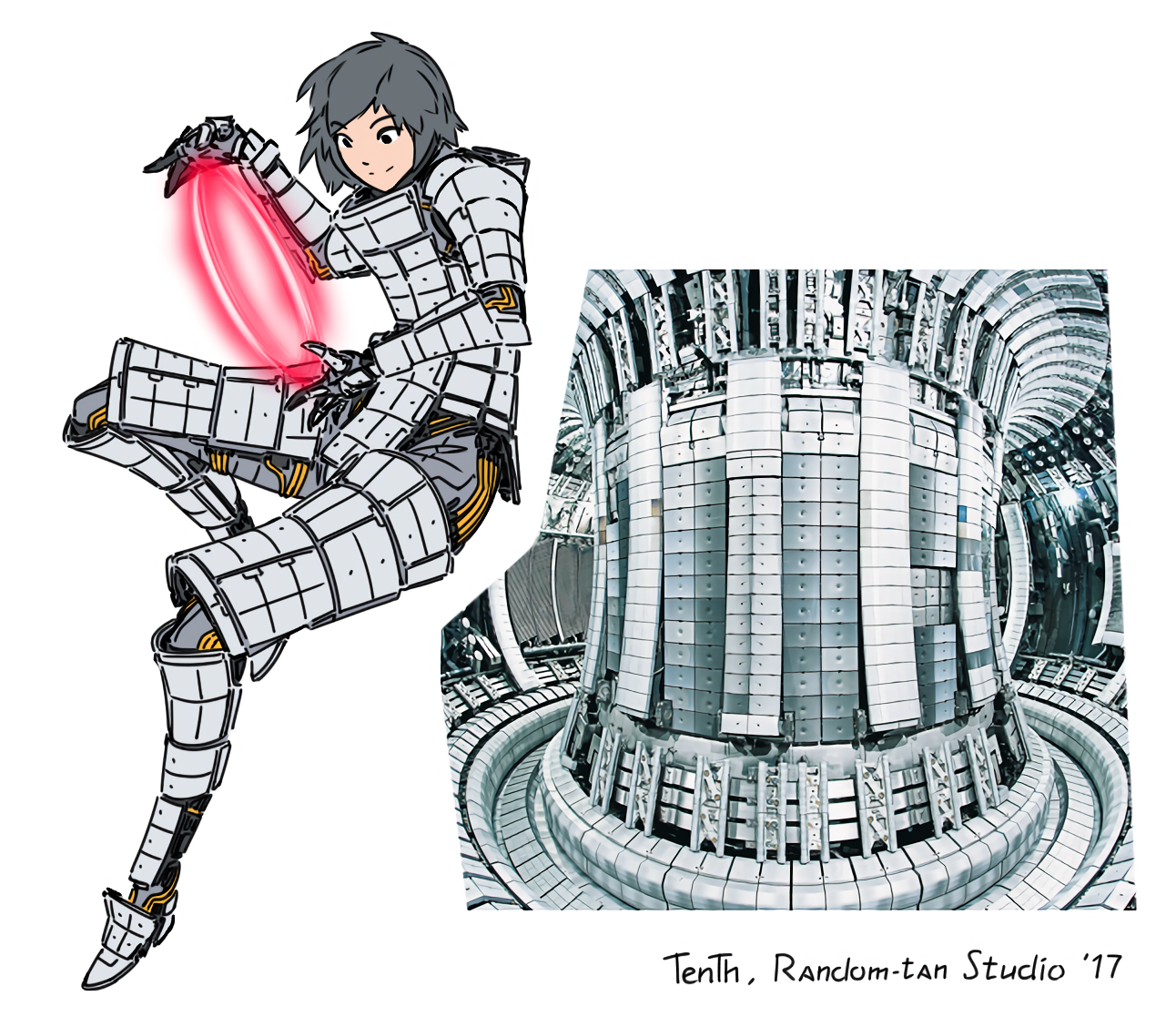

Very creative with the various black protrusions.

ChaoticNeutralCzech

Lethal humanoid monsters, weird voice acting (likely not AI though) and "telephone"-distorted audio (it's not just because I limited the bitrate to 20 kb/s to fit under 10 MiB; the YouTube video sounds like that too). It's an artistic choice but not a very rare one, so likely not directly inspired by H. P. Lovecraft's audiobooks.

I'll show you some superior weaponry. Today's post won't be automated.

You are right, QR codes are very easy to decode if you have them raw. Even the C64 should do it in a few seconds, maybe a minute for one of those 22 giant ones. The hard part is the image processing needed to decode a camera picture, and that can be done on the C64 too if it has enough time and some external memory (or disks for virtual memory). People have even emulated a 32-bit RISC processor on the poor thing and made it boot Linux.

Some of them use bismuth, which is as weakly radioactive as it gets, but why? It's still a heavy metal and might be poisonous if parts of it shed off.

Yeah, I'm using Joplin over Nextcloud and it would absolutely be compatible, the Markdown syntax is the same after all.

I wouldn't say "shit" but rather niche. Most people who would love a Reddit-like place have Reddit and don't hate it enough to switch, especially since we don't have extensive hobby communities with a long history.

In almost all microwaves, the control circuitry or mechanical switches only ever switch 2-3 power circuits: motor+fan (+bulb, sometimes separately) and the high-voltage heating circuit (transformer+diode+capacitor+magnetron). It can therefore only switch the heat between 0 and max, usually in a slow PWM cycle with a 15-30 s period (that hopefully does not coincide with the tray rotation period). The inputs can be manual only, or sometimes there is also a scale, moisture sensor and microphone, along with thermal fuses for safety.
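A rough sketch of that slow PWM in Python, in case it helps. The 20 s period, the 800 W rating and both function names are my own assumptions for illustration, not taken from any real microwave firmware:

```python
def magnetron_schedule(power_fraction, period_s=20.0, total_s=60.0):
    """Return (start_s, end_s) intervals during which the magnetron is on.

    The heating circuit is either fully on or fully off, so a "power level"
    is just the fraction of each period spent on (assumed period: 20 s).
    """
    if not 0.0 <= power_fraction <= 1.0:
        raise ValueError("power_fraction must be between 0 and 1")
    on_time = power_fraction * period_s
    intervals = []
    start = 0.0
    while start < total_s:
        end = min(start + on_time, total_s)
        if end > start:  # skip empty intervals at 0% power
            intervals.append((start, end))
        start += period_s
    return intervals


def average_power(intervals, total_s, max_power_w):
    """Average heating power over the whole run, in watts."""
    on_seconds = sum(end - start for start, end in intervals)
    return max_power_w * on_seconds / total_s


if __name__ == "__main__":
    schedule = magnetron_schedule(0.5)  # "medium": on for half of each period
    print(schedule)
    print(average_power(schedule, 60.0, 800.0))  # hypothetical 800 W oven
```

At 50% the magnetron still runs at full blast, just for half of every period, which is why the food gets time for the heat to spread between bursts.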

I think the pizza setting is just a generic medium one with short 50% cycles to allow the heat to spread. The popcorn setting can be much more interesting:

https://www.youtube.com/watch?v=Limpr1L8Pss

If it's a joke, the website is way too committed to the bit. They appear to also have less ridiculous articles with no obvious signs of satire, host Sunday and Friday services, sell books etc. The "New Month" is probably just something to fill their WordPress template's calendar widget that they never figured out how to delete.

There is just one picture... Show me a screenshot if you see two.