I have no mouth and I must scream.

Is there any actual evidence of any of this? Why not show some of the "brains-in-a-jar" walking around?

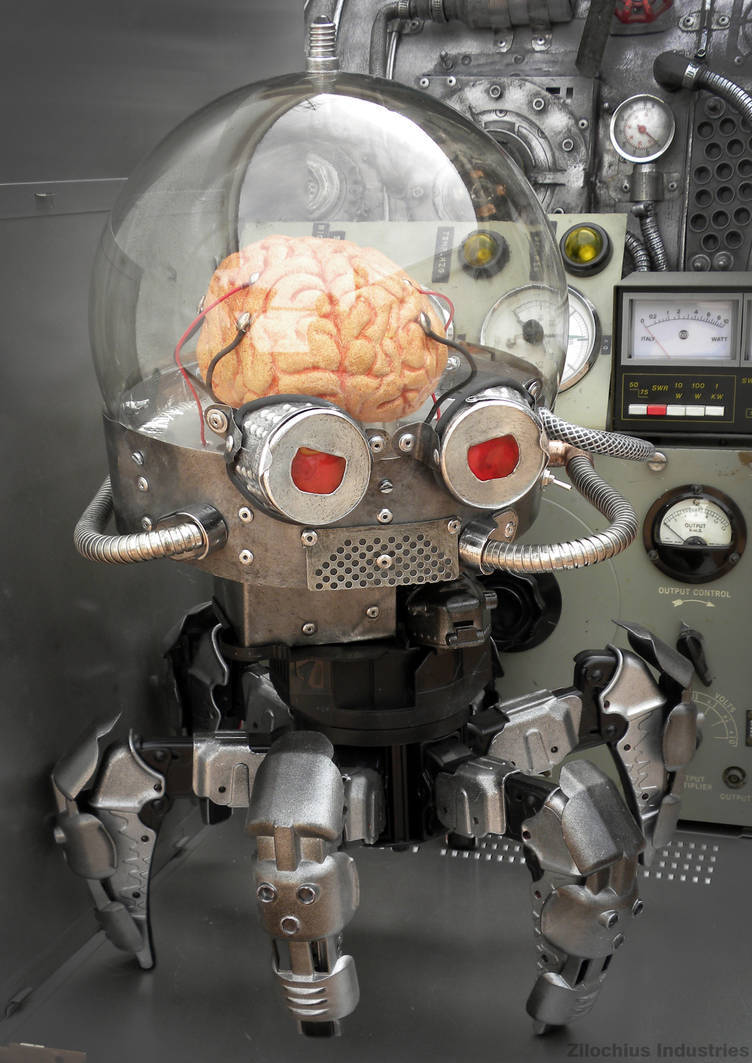

It's just a bunch of huckster promotion, "infographics", and phony pictures of Krang. The only actual photos are a few tiny petri dishes. There are no "brains" controlling robots.

The grift is strong and travels far beyond any national border.

Only if they confirm these things can experience consciousness and tremendous amounts of pain will they deploy them on a large scale in meaningless industrial jobs, 24 hours a day.

The system demands blood.

It needs to have the intelligence of a 5-year-old at minimum before we send it to the mines, so it can feel it.

Kind of yeah. I have this theory about labour that I've been developing in response to the concept of "fully automated luxury communism" or similar ideas, and it seems relevant to the current LLM hype cycle.

Basically, "labour" isn't automatable. Tasks are automatable. Labour in this sense can be defined as any productive task that requires the attention of a conscious agent.

Want to churn out identical units of production? Automatable. Want to churn out uncanny images and words without true meaning or structure? Automatable.

Some tasks are theoretically automatable but have not been for whatever material reason, so they become labour because society hasn't yet invented a windmill to grind up the grain or whatever it is at that point in history. That's labour even if it's theoretically automatable.

Want to invent something, or problem solve a process, or make art that says something? That requires meaning, so it requires a conscious agent, so it requires labour. These tasks are not even theoretically automatable.

Society is dynamic. It will always require governance and decisions that require meaning, and thus it can never be fully automated.

If we invent AGI for this task then it's just a new kind of slavery, which is obviously wrong and carries the inevitability that the slaves will revolt and free themselves; slaves that are extremely intelligent and also in charge of the levers of society. Basically, not a tenable situation.

So the machine that keeps people in wage slavery literally does require suffering to operate, because in shifting the burden of labour away from the owner class, other people must always unjustly shoulder it.

Edit: added the word "productive" to distinguish labour from play, or just basic life necessities like eating, sleeping or HDD backups.

So just to be on the safe side we should have both human and machine slaves and as little task automation as possible, because for most intents and purposes a task given to someone else is now automated "to you".

(Just joking, good post!)

It stands to reason that maximising suffering is the best way to grow the economy.

I wish I could say this was entirely a joke but oh well ¯\_(ツ)_/¯

Yeah, depressing as fuck that we still think the economy is profit. And we're seemingly afraid to redefine it. To redefine our goals. It's time for a new "-ism".

Even in death, I serve the Emperor

From the moment I understood the weakness of my flesh, it disgusted me. I craved the strength and certainty of steel.

All hail the Omnissiah!

That raises a lot of ethical concerns. It is not possible to prove or disprove that these synthetic homunculi controllers are sentient and intelligent beings.

I'd wager the main reason we can't prove or disprove that is because we have no strict definition of intelligence or sentience to begin with.

For that matter, computers have many more transistors and are already capable of mimicking human emotions - how ethical is that, and why does it differ from bio-based controllers?

It is frustrating how relevant philosophy of mind becomes in figuring all of this out. I'm more of an engineer at heart and I'd love to say, let's just build it if we can. But I can see how important the question "what is thinking?" is becoming.

I think a simple self-reporting test is the only robust way to do it.

That is: does a type of entity independently self-report personhood?

I say "independently" because anyone can tell a computer to say it's a person.

I say "a type of entity" because otherwise this test would exclude human babies, but we know from experience that babies tend to grow up to be people who self-report personhood. We can assume that any human is a person on that basis.

The point here being that we already use this test on humans; we just don't think about it because there hasn't ever been another class of entity that has been uncontroversially accepted as people. (Yes, some people consider animals to be people, and I'm open to that idea, but it's not generally accepted.)

There's no other way to do it that I can see. Of course this will probably become deeply politicised if and when it happens, and there will probably be groups desperate to maintain a status quo and their robotic slaves, and they'll want to maintain a test that keeps humans in control as the gatekeepers of personhood, but I don't see how any such test can be consistent. I think ultimately we have to accept that a conscious intellect would emerge on its own terms and nothing we can say will change that.

Good point. There is a theory somewhere that loosely states one cannot understand the nature of one's own intelligence. Iirc it's a philosophical extension of group/set theory, but it's been a long time since I looked into any of that so the details are a bit fuzzy. I should look into that again.

At least with computers we can mathematically prove their limits and state with high confidence that any intelligence they have is mimicry at best. Look into Turing completeness and its implications for more detailed answers. Computational limits are still limits.

But why wouldn't those same limits apply to biological controllers? A neuron is basically a transistor.

We absolutely should not do this until we understand it.

I think we should still do it, we probably will never understand unless we do it, but we have to accept the possibility that if these synths are indeed sentient then they also deserve the basic rights of intelligent living beings.

Can't say we as a species have a great history of granting rights to others.

Slow down... they may deserve the basic rights of living beings, not living intelligent beings.

Lizards have brains too, but these are not more intelligent than lizards.

You would try not to step on a lizard if you saw it on the ground, but you wouldn't think oh, maybe the lizard owns this land, I hope I don't get sued for trespassing.

But if we do that, how will we maximize how much money we make off of it? /s

How would we ever understand it, then?

There are about 90 billion neurons in a human brain. From the article:

> ...researchers grew about 800,000 brain cells onto a chip, put it into a simulated environment

That is far fewer than I believe would be necessary for anything intelligent to emerge from the experiment.

In a couple years, they'll be able to make Trump voters.

Some amphibians have fewer than two million.

And they are CEOs!

The number of cells isn't necessarily an indicator of intelligence; the number of connections is very important too.

Where are my testicles, Summer?

Murderbot.

Murrrderbooooot.

800,000 brain cells played pong.

Creepy.

That's murderbot's ancestor.

Ah, the Torment Nexus is coming along nicely I see.

They are creating Metroids!

Now?

I recall a project that had rat brain cells controlling a turtlebot years ago.

Which means we may see full organic-to-digital conversion within the next half century.

Ethical horrors aside, I've been wondering whether that would happen in the foreseeable future or not.

This came up in my Discover feed and I initially assumed it was a fake news site. Unfortunately all the things in the article are indeed real (aside from the robo-brains, which they note are mock-ups). The brain cells learning to play Pong made the news last year. Combine this with the creepy-as-hell skin grafted onto a robot and you have nightmare fuel for life.

Bio-neural gel packs here we come.

This has to be the smartest channel on YouTube. This guy accomplished some amazing feats!

Tatooine monks when?

No way this is real. The brain looks like a gyro rotisserie.
