Hi All,

You may have seen the issues occurring on some lemmy.world communities regarding CSAM spamming. Thankfully, it appears they have taken the right steps to reduce these attacks, but there are some issues with the way Lemmy caches images from other servers, and with the potential for CSAM to make its way onto our image server without us being aware.

Therefore, we're taking a balanced approach to the situation and trying to deal with these issues in the least impactful way.

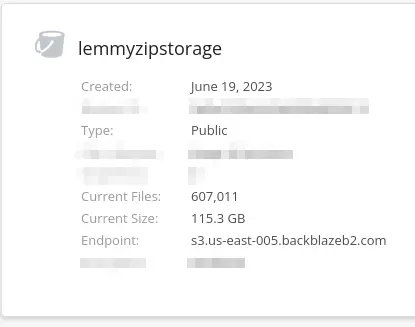

As you read this, we're using AI (with thanks to @db0's fantastic lemmy-safety script) to scan our entire image server and automatically delete any possible CSAM. This does come with caveats, in that there will absolutely be false positives (think memes with children in them), but this is preferable to nuking the entire image database or stopping people from uploading images altogether.

It may not be a 100% guarantee that CSAM is removed from the server, but it is better than doing nothing.

We have a pretty good track record locally with account bans (maybe one or two in total), which is great. If we notice an uptick in spam accounts, we'll look to introduce measures to prevent these bots from creating spam if they slip past the registration process. For example, ZippyBot can already take over community creation, which would stop new accounts from creating communities, so that only those with a high enough account score would be able to do so.

We don't need (or want) to enable this yet, but we want you all to know that we have tools available to help keep this instance safe if we need to use them.

If you have any questions, please let me know.

Thanks all

Demigodrick

Thanks, still some tidying up to do :)

Edit: that one's fixed, but let me know if anything else wonky comes up!

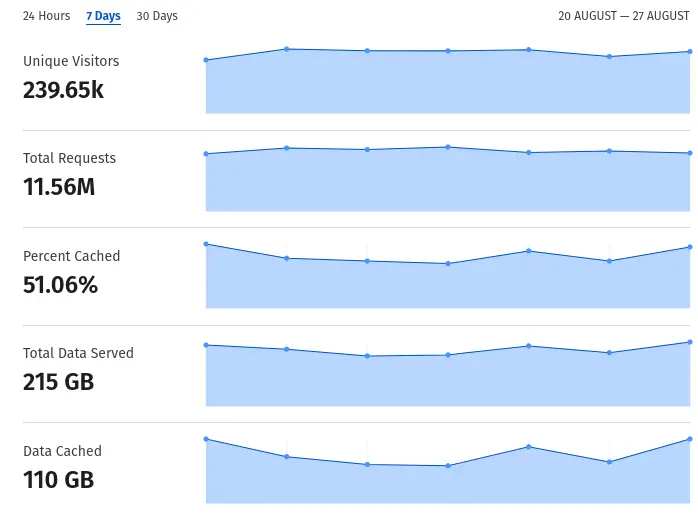

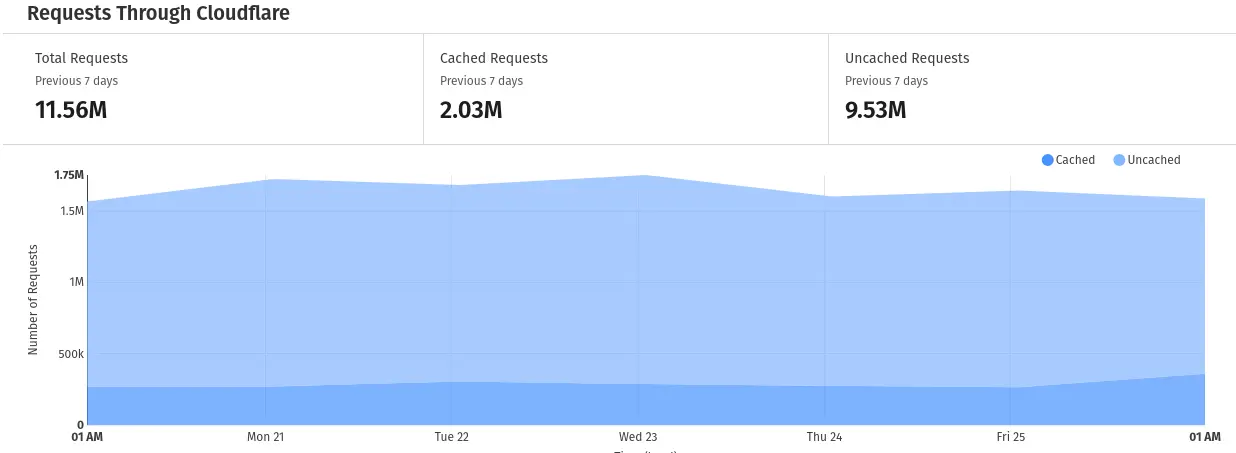

Glad it's faster too!